In today’s AI-driven world, you need to stay ahead of emerging state laws and federal regulations that protect consumer data and enforce transparency. Implement privacy-by-design, assess risks regularly, and keep detailed records so you can demonstrate compliance. Knowing your obligations helps you avoid penalties and build trust. Staying informed about legal developments and adopting responsible AI practices will help you navigate the complex data protection landscape with confidence.

Key Takeaways

- Stay informed on evolving state and federal privacy laws to ensure compliance across jurisdictions.

- Conduct comprehensive data inventories and implement privacy-by-design to protect consumer data.

- Perform regular AI risk assessments and publish transparency reports to identify biases and demonstrate accountability.

- Embed security measures like encryption and access controls to prevent data breaches and legal liabilities.

- Train teams on legal updates, ethical standards, and enforcement trends for a proactive privacy management approach.

Emerging State Privacy Regulations and Their Impact

As more states implement their own privacy laws in 2025, the data protection landscape is becoming increasingly complex and fragmented. You’ll need to navigate a patchwork of regulations, each with different rights and obligations. States like Delaware, Iowa, Nebraska, New Hampshire, New Jersey, Tennessee, Minnesota, and Maryland have enacted laws granting consumers rights to access and control their data and, in some cases, to question automated decisions made about them. Some laws require transparency around AI decisions, risk assessments, and data security measures. Organizations must adapt quickly to these diverse requirements, often by adopting new data governance practices. In practice, that means continuously monitoring evolving state laws and adjusting privacy policies and procedures proactively to avoid penalties and build consumer trust.

Federal AI Legislation and Consumer Rights Protections

Federal AI proposals in 2025 are shaping consumer rights by setting transparency and accountability standards for high-risk AI systems. Several proposals would require companies to provide notice, explanations, and options to opt out of AI-driven decisions that significantly affect you. Bills like the Artificial Intelligence Research, Innovation, and Accountability Act emphasize transparency reports and security protocols, so that organizations disclose how AI systems operate in critical areas. Consumer rights would expand to include the ability to challenge automated decisions and request corrections. Federal proposals would also empower NIST to develop sector-specific guidelines, helping you better understand how AI affects your privacy and rights. These measures aim to balance innovation with protection, giving you more control and clarity over AI’s role in your daily life. Ongoing research into AI vulnerabilities also underscores the need for robust safety measures, security protocols, and continuous monitoring to protect consumers from potential harms.

Organizational Strategies for Ensuring Data Privacy and Compliance

How can organizations effectively safeguard data privacy and stay compliant amid evolving legal requirements? You need a proactive, layered approach. Start with thorough data inventories so you know what you hold, where it is, and how it’s used. Implement privacy-by-design principles, embedding security and transparency into every system. Conduct risk assessments regularly, especially for high-risk AI applications. Train your team on legal updates and ethical standards. Establish clear vendor policies to monitor third-party AI tools. The following table pairs common challenges with practical solutions:

| Challenge | Solution |
|---|---|
| Fear of non-compliance | Continuous monitoring and audits |
| Data breaches and leaks | Robust encryption and access controls |
| Public mistrust | Transparent communication and accountability |
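
To make the data inventory step more concrete, here is a minimal Python sketch of what an inventory record and a simple review check might look like. Everything in it is an assumption for illustration: the `DataAsset` fields, the `SENSITIVE_CATEGORIES` list, and the `needs_review` rules are hypothetical and are not taken from any statute or framework.

```python
from dataclasses import dataclass, field


@dataclass
class DataAsset:
    """One entry in a hypothetical data inventory."""
    name: str                 # what you hold
    location: str             # where it is stored
    purpose: str              # how it is used
    categories: set = field(default_factory=set)  # kinds of personal data held
    used_by_ai: bool = False  # whether an AI system consumes this data
    encrypted: bool = False   # whether the data is encrypted at rest


# Assumed list of sensitive categories; adjust it to the laws that apply to you.
SENSITIVE_CATEGORIES = {"biometric", "health", "precise_location"}


def needs_review(asset):
    """Return the reasons, if any, that an asset should be flagged for review."""
    reasons = []
    sensitive = asset.categories & SENSITIVE_CATEGORIES
    if sensitive and not asset.encrypted:
        reasons.append("sensitive data stored unencrypted: " + ", ".join(sorted(sensitive)))
    if sensitive and asset.used_by_ai:
        reasons.append("sensitive data feeds an AI system; risk assessment advised")
    return reasons


inventory = [
    DataAsset("crm_contacts", "us-east database", "customer support",
              {"email", "name"}, encrypted=True),
    DataAsset("face_login", "mobile backend", "authentication",
              {"biometric"}, used_by_ai=True),
]

# Collect only the assets that have at least one review reason.
flagged = {}
for asset in inventory:
    reasons = needs_review(asset)
    if reasons:
        flagged[asset.name] = reasons

for name, reasons in flagged.items():
    print(name, "->", reasons)
```

Keeping the inventory as structured records rather than a free-form spreadsheet makes it easier to rerun checks like this whenever the rules or the data change.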

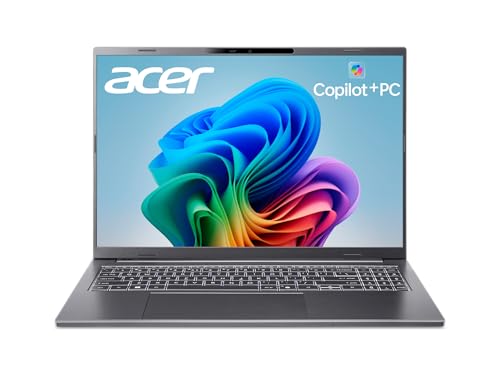

The Role of AI Transparency and Risk Assessments

Have you considered why transparency and risk assessments are essential to trustworthy AI deployment? Transparency helps stakeholders understand how AI systems make decisions, which fosters trust and accountability. Risk assessments identify potential harms, biases, or safety issues before deployment, so you can mitigate them proactively. By documenting AI processes and outcomes, you can demonstrate compliance with evolving laws and regulations. Regular evaluations help uncover unintended consequences and improve system fairness. Together, these practices support ethical AI use and reduce legal liability and public backlash. In today’s regulatory landscape, these measures aren’t optional; they’re critical to building responsible AI that respects privacy rights and safeguards users. Designing AI systems with explainability in mind and applying established data protection best practices further strengthens stakeholder confidence and compliance.
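
One way to document risk assessments so they can feed a transparency report is a simple risk register. The Python sketch below is illustrative only: the `RiskItem` structure, the 1 to 5 scales, the likelihood-times-impact score, and the thresholds are all assumptions, not requirements drawn from any law or standard.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class RiskItem:
    """One documented risk for an AI system (hypothetical structure)."""
    system: str
    description: str
    likelihood: int  # 1 (rare) to 5 (almost certain); assumed scale
    impact: int      # 1 (minor) to 5 (severe); assumed scale
    mitigation: str
    assessed_on: date

    @property
    def score(self):
        return self.likelihood * self.impact

    @property
    def level(self):
        # Thresholds are illustrative, not drawn from any statute.
        if self.score >= 15:
            return "high"
        if self.score >= 8:
            return "medium"
        return "low"


def transparency_summary(items):
    """Count risks per level and list the high-level ones, highest score first."""
    counts = {"high": 0, "medium": 0, "low": 0}
    for item in items:
        counts[item.level] += 1
    high = sorted((i for i in items if i.level == "high"), key=lambda i: -i.score)
    return {"counts": counts, "high_risks": [i.description for i in high]}


register = [
    RiskItem("loan_scoring", "Approval rates differ across demographic groups",
             4, 5, "Run a fairness audit before each release", date(2025, 3, 1)),
    RiskItem("support_chatbot", "Prompt logs retain personal data longer than needed",
             3, 3, "Apply a 30-day retention limit", date(2025, 3, 1)),
    RiskItem("support_chatbot", "Occasional off-topic answers",
             2, 1, "Monitor user feedback", date(2025, 3, 1)),
]

summary = transparency_summary(register)
print(summary)
```

Recording the mitigation and assessment date alongside each risk gives you a dated paper trail to point to when a regulator or customer asks how a system was evaluated.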

Navigating Enforcement and Legal Challenges in AI Data Management

Managing enforcement and legal challenges in AI data management requires a proactive approach to compliance, as regulators are increasingly scrutinizing how organizations handle personal data. Stay ahead by conducting thorough data inventories and implementing privacy-by-design principles. Regular audits help identify potential violations, while clear documentation supports compliance efforts. Staying informed about evolving laws and enforcement actions is essential, especially as states expand privacy statutes and federal proposals aim for transparency and accountability. Strong data security measures help prevent breaches that lead to legal consequences. Building privacy requirements into your organizational processes keeps you aligned with legal expectations and reduces the risk of non-compliance. A comprehensive risk management strategy further protects your organization against emerging legal threats, and compliance training programs raise awareness and promote a culture of privacy. Hackathons can also foster innovative solutions to data security and privacy challenges within your organization.

Frequently Asked Questions

How Do Emerging State Laws Affect Multinational AI Companies?

Emerging state laws substantially affect your multinational AI company by increasing compliance complexity across jurisdictions. You’ll need to adapt your data practices to meet diverse privacy requirements, such as consumer rights, risk assessments, and transparency obligations. These laws may require implementing privacy-by-design, conducting regular audits, and modifying AI systems to avoid unlawful discrimination or biometric misuse. Staying proactive helps you manage legal risks, maintain compliance, and keep consumer trust across all regions.

What Are the Penalties for Non-Compliance With AI-Specific Privacy Laws?

If you don’t comply with AI-specific privacy laws, you could face hefty fines, enforcement actions, and lawsuits. Regulatory agencies may impose sanctions, mandate corrective measures, or require audits. State laws like Minnesota’s give consumers rights to challenge automated decisions, and violations could lead to penalties. Non-compliance risks reputational damage, legal liabilities, and increased scrutiny, so it’s vital to implement robust data governance and privacy-by-design practices to stay compliant.

How Can Small Businesses Implement AI Privacy Safeguards Effectively?

Think of implementing AI privacy safeguards like building a fortress: you need strong walls and a clear blueprint. As a small business, start by conducting a data inventory to know what personal information you handle. Implement privacy-by-design principles, verify vendor compliance, and stay updated on state laws. Use transparent practices, offer consumers options to opt out, and regularly review your systems for fairness and safety. This approach keeps your data secure and your reputation intact.

What Is the Role of Consumer Consent in AI-Driven Data Collection?

Consumer consent is vital in AI-driven data collection because it guarantees you’re respecting individuals’ privacy rights. You should clearly inform users about what data you collect, how you’ll use it, and obtain their explicit permission before gathering sensitive or personal data. By doing so, you build trust, comply with legal requirements, and reduce your risk of enforcement actions. Always provide options for users to opt out or withdraw consent easily.
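
As a rough illustration of gating data collection on consent, the Python sketch below records consent per user and purpose and refuses to collect without it. The `ConsentRegistry` class, the `collect` function, and the purpose names are hypothetical; a real system would also need persistent storage, audit logs, and legal review.

```python
from datetime import datetime, timezone


class ConsentRegistry:
    """In-memory record of which purposes each user has consented to."""

    def __init__(self):
        self._records = {}  # (user_id, purpose) -> time consent was given

    def grant(self, user_id, purpose):
        self._records[(user_id, purpose)] = datetime.now(timezone.utc)

    def withdraw(self, user_id, purpose):
        # Withdrawing takes a single call, mirroring how easy granting is.
        self._records.pop((user_id, purpose), None)

    def has_consent(self, user_id, purpose):
        return (user_id, purpose) in self._records


def collect(registry, user_id, purpose, payload):
    """Return a record only if the user consented to this purpose, else None."""
    if not registry.has_consent(user_id, purpose):
        return None
    return {"user_id": user_id, "purpose": purpose, "data": payload}


registry = ConsentRegistry()
registry.grant("user-42", "personalization")

allowed = collect(registry, "user-42", "personalization", {"clicks": 12})
blocked = collect(registry, "user-42", "ad_targeting", {"clicks": 12})

registry.withdraw("user-42", "personalization")
after_withdrawal = collect(registry, "user-42", "personalization", {"clicks": 12})

print(allowed, blocked, after_withdrawal)
```

Tying consent to a specific purpose, rather than storing a single yes/no flag per user, means consent given for one use cannot silently cover another.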

How Will Future Federal Legislation Influence AI Innovation and Privacy Balance?

Future federal legislation aims to strike a balance between fostering AI innovation and protecting privacy. You’ll need to comply with transparency, accountability, and security standards, especially for high-risk AI systems. While promoting innovation through frameworks like NIST standards and regulatory sandboxes, laws will also enforce consumer rights, mandate risk assessments, and require organizations to adopt privacy-by-design practices. This dual approach encourages responsible AI growth without compromising individual privacy rights.

Conclusion

While navigating AI and privacy laws can seem overwhelming, embracing these regulations helps protect your organization and build trust with users. Staying proactive with compliance and transparency isn’t just a legal obligation; it’s a competitive advantage. Don’t let fear of complexity hold you back. With the right strategies, you can turn data privacy into a strength. Embrace these changes confidently, knowing you’re safeguarding your reputation and fostering responsible AI innovation.