As the guardian of your data, I stay on constant alert for the ever-changing cyber threats of the online world.

In this age of uncertainty, artificial intelligence (AI) security emerges as a formidable ally, fortifying our defenses and ushering in a new era of data protection.

Join me on a journey as we explore the pivotal role of AI security in safeguarding your most valuable asset – your data.

Together, let’s reimagine safety in the face of relentless adversaries.

Key Takeaways

- Cyber threats have rapidly evolved due to advancements in technology, creating opportunities for cybercriminals to carry out sophisticated phishing attacks, ransomware, and data breaches that significantly impact privacy.

- AI plays a pivotal role in detecting and mitigating these threats by leveraging machine learning algorithms and predictive analytics to enhance the speed and accuracy of threat detection and enable immediate action against threats.

- AI security measures enhance data protection strategies by identifying vulnerabilities in encryption algorithms, developing more robust methods, and implementing advanced authentication techniques such as biometrics and multi-factor authentication.

- AI security systems provide real-time monitoring, threat detection, and predictive analytics, actively safeguarding data and strengthening cybersecurity defenses in the face of evolving threats.

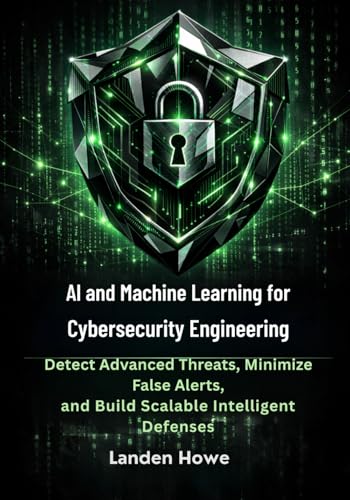


As an affiliate, we earn on qualifying purchases.


The Evolution of Cyber Threats

As an AI security specialist, I have witnessed the rapid evolution of cyber threats alongside my team. With the emergence of new technologies, the cybersecurity landscape has become more complex and challenging.

These advancements, while offering numerous benefits, also present significant risks to individual privacy. The increasing interconnectedness of devices and the vast amount of data being generated have created opportunities for cybercriminals to exploit vulnerabilities and compromise personal information.

From sophisticated phishing attacks to ransomware and data breaches, the impact on privacy shouldn't be underestimated.

As AI continues to evolve, it plays a pivotal role in detecting and mitigating these threats. By leveraging machine learning algorithms and predictive analytics, AI security solutions have the potential to proactively identify and respond to emerging cyber threats, safeguarding our privacy in this ever-changing digital landscape.


Understanding AI Security

With the rapid evolution of cyber threats, it’s crucial to have a clear understanding of AI security and its pivotal role in protecting your data. AI security challenges are becoming increasingly complex as hackers find new ways to breach systems and exploit vulnerabilities. To address these challenges, organizations must recognize the importance of AI in cybersecurity and leverage its capabilities to enhance their defense mechanisms.

Here are three key points to consider:

- AI-powered threat detection: AI algorithms can analyze vast amounts of data in real-time, enabling the identification of suspicious patterns and anomalies that may indicate a cyber attack. This proactive approach enhances the speed and accuracy of threat detection, minimizing the risk of data breaches.

- Intelligent response automation: AI can automate incident response processes, allowing for immediate action to be taken against threats. By leveraging machine learning techniques, organizations can develop intelligent systems that can autonomously respond to security incidents, mitigating the potential impact on data privacy and integrity.

- Adaptive security measures: AI can continuously learn and adapt to evolving threats, making it a powerful tool in maintaining robust cybersecurity. By leveraging AI, organizations can stay one step ahead of attackers by implementing dynamic security measures that can quickly adapt to changing threat landscapes.
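
The detection and response ideas above can be sketched in a few lines. This is a minimal illustration, not a production detector: it scores a new observation against a historical baseline with a z-score and maps the score to a response. The sample data, the thresholds, and the action names (`allow`, `require_mfa`, `lock_account`) are all assumptions made for the example.

```python
from statistics import mean, stdev


def zscore(baseline, value):
    """How many standard deviations `value` lies from the baseline mean."""
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly flat baseline: any different value is maximally unusual.
        return 0.0 if value == mu else float("inf")
    return (value - mu) / sigma


def respond(score, threshold=3.0):
    """Map an anomaly score to a hypothetical automated response."""
    if score >= 2 * threshold:
        return "lock_account"
    if score >= threshold:
        return "require_mfa"
    return "allow"


# Made-up history: failed logins per hour for one account.
baseline = [2, 3, 1, 2, 4, 3, 2, 3]

for observed in (3, 6, 40):
    score = zscore(baseline, observed)
    print(observed, round(score, 2), respond(score))
```

Real systems learn far richer models than a single z-score, but the shape is the same: establish what normal looks like, measure how far new activity deviates from it, and tie the response to the size of the deviation.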

Understanding AI security is crucial in today’s digital landscape. By harnessing the power of AI, organizations can strengthen their cybersecurity defenses and protect their valuable data from increasingly sophisticated cyber threats.


Enhancing Data Protection Strategies

Incorporating AI security measures can make a data protection strategy more effective.

One key aspect of data protection is encryption. Encryption transforms sensitive information into unreadable ciphertext, making it difficult for unauthorized individuals to access or decipher the data. AI can support this by helping to identify weaknesses in how encryption algorithms are implemented or configured, and by informing the development of more robust encryption methods.
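
As a rough illustration of what "identifying weaknesses" can mean in practice, the sketch below audits an inventory of encryption settings against a simple policy. It is deliberately rule-based rather than AI-driven; it shows the kind of baseline check that smarter tooling could build on. The inventory, the field names, and the policy values are assumptions for the example.

```python
# Hypothetical policy: the cipher list and minimum key sizes are illustrative.
DEPRECATED_CIPHERS = {"DES", "3DES", "RC4"}
MIN_KEY_BITS = {"AES": 128, "RSA": 2048}


def audit_encryption(inventory):
    """Return (system, reason) pairs for entries that violate the policy."""
    findings = []
    for entry in inventory:
        algorithm = entry["algorithm"].upper()
        key_bits = entry["key_bits"]
        if algorithm in DEPRECATED_CIPHERS:
            findings.append((entry["system"], f"{algorithm} is deprecated"))
        elif key_bits < MIN_KEY_BITS.get(algorithm, 0):
            findings.append(
                (entry["system"], f"{algorithm} key of {key_bits} bits is too short")
            )
    return findings


# Made-up inventory for illustration only.
inventory = [
    {"system": "backup-archive", "algorithm": "3DES", "key_bits": 168},
    {"system": "customer-db", "algorithm": "AES", "key_bits": 256},
    {"system": "legacy-vpn", "algorithm": "RSA", "key_bits": 1024},
]

for system, reason in audit_encryption(inventory):
    print(f"{system}: {reason}")
```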

Another important element is user authentication methods. AI can help in implementing advanced authentication techniques such as biometrics, multi-factor authentication, and behavioral analysis to verify the identity of users accessing the data.
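
To make multi-factor authentication concrete, here is a minimal sketch of the time-based one-time passwords (TOTP, RFC 6238) that many authenticator apps generate as a second factor. It uses only the Python standard library. The function names, the shared secret, and the one-step verification window are assumptions for illustration; a real deployment would rely on a vetted library plus rate limiting and secure secret storage.

```python
import hashlib
import hmac
import struct
import time


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """HMAC-based one-time password (RFC 4226) using HMAC-SHA1."""
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = digest[-1] & 0x0F  # dynamic truncation offset
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def totp(secret: bytes, at=None, step: int = 30, digits: int = 6) -> str:
    """Time-based variant: the counter is the number of elapsed time steps."""
    now = time.time() if at is None else at
    return hotp(secret, int(now // step), digits)


def verify(secret: bytes, submitted: str, at=None, step: int = 30, window: int = 1) -> bool:
    """Accept codes from the current step and `window` steps either side."""
    now = time.time() if at is None else at
    counter = int(now // step)
    candidates = [counter + d for d in range(-window, window + 1) if counter + d >= 0]
    # compare_digest avoids leaking how many leading digits matched.
    return any(hmac.compare_digest(hotp(secret, c), submitted) for c in candidates)


# Shared secret taken from the RFC test vectors, not a real credential.
secret = b"12345678901234567890"
print(totp(secret, at=59))  # RFC 4226 vector for counter 1: 287082
print(verify(secret, totp(secret)))
```

The small verification window tolerates clock drift between the server and the user's device, at the cost of each code staying valid slightly longer.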

By incorporating AI security measures, a data protection strategy can become more resilient, adaptive, and proactive in defending against potential threats. With these enhanced strategies in place, data is better protected from unauthorized access and potential breaches.

With these strategies in mind, let's look at how AI security works in practice.

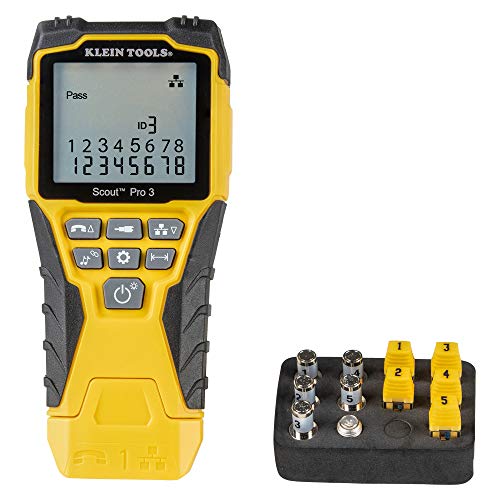


AI Security in Action

To illustrate the practical applications of AI security, let's look at three capabilities that are changing how data is protected.

- Real-time monitoring: AI security systems have the capability to constantly monitor network traffic, data access, and user behavior in real time. This ensures that any suspicious activity or potential threats are detected immediately, allowing for swift action to be taken.

- Threat detection: AI algorithms are trained to analyze vast amounts of data and identify patterns that indicate potential security threats. By leveraging machine learning and deep learning techniques, AI security systems can help detect sophisticated attacks, including zero-day exploits, malware, and advanced persistent threats.

- Predictive analytics: AI security solutions can also use predictive analytics to anticipate future threats based on historical data and trends. By analyzing patterns and anomalies, AI systems can proactively identify potential vulnerabilities and weaknesses in the network, helping organizations take preemptive measures to strengthen their security posture.
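
Real-time monitoring against a baseline that adapts over time can be sketched as follows. This is a simplified illustration under stated assumptions, not how any particular product works: an exponentially weighted moving average (EWMA) tracks the expected value of a metric and flags observations that stray too far from it. The class name, parameters, and sample traffic figures are hypothetical.

```python
class EwmaMonitor:
    """Streaming anomaly detector built on an exponentially weighted baseline."""

    def __init__(self, alpha=0.2, tolerance=3.0, warmup=5):
        self.alpha = alpha          # weight given to the newest observation
        self.tolerance = tolerance  # allowed deviation, in standard deviations
        self.warmup = warmup        # observations to see before alerting
        self.mean = None
        self.var = 0.0
        self.seen = 0

    def observe(self, value):
        """Return True if `value` looks anomalous, then update the baseline."""
        self.seen += 1
        if self.mean is None:
            self.mean = float(value)
            return False
        deviation = value - self.mean
        # Floor the spread at 1.0 so a very quiet baseline does not over-alert.
        spread = max(self.var ** 0.5, 1.0)
        alert = self.seen > self.warmup and abs(deviation) > self.tolerance * spread
        if not alert:
            # Learn only from normal traffic so a spike cannot shift the baseline.
            self.mean += self.alpha * deviation
            self.var = (1 - self.alpha) * (self.var + self.alpha * deviation ** 2)
        return alert


# Made-up stream: requests per minute, with one obvious spike.
stream = [100, 102, 98, 101, 99, 100, 102, 500, 101]
monitor = EwmaMonitor()
alerts = [i for i, value in enumerate(stream) if monitor.observe(value)]
print(alerts)
```

Skipping the baseline update on alerts is a design choice: it keeps an attack from teaching the monitor that attack traffic is normal, but it also means a genuine, lasting shift in traffic needs a manual reset or a separate relearning rule.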

These examples demonstrate how AI security is actively safeguarding data by providing real-time monitoring, advanced threat detection, and predictive analytics capabilities. With AI at the forefront, organizations can stay one step ahead in the ever-evolving landscape of cybersecurity.

The Future of Cybersecurity

As we look to the future of cybersecurity, let's consider how AI security will continue to evolve and shape data protection. Rapid advances in the field have made artificial intelligence a powerful tool for safeguarding sensitive information. AI has the potential to change the way we protect data by automating threat detection, response, and prevention. Its ability to analyze vast amounts of data in real time allows for more accurate identification of potential threats and vulnerabilities.

Moreover, AI can continuously learn from new cyber threats, adapting its algorithms to stay one step ahead of malicious actors. The impact of AI on data protection should not be underestimated: it makes traditional security measures more effective and efficient, helping to preserve the confidentiality, integrity, and availability of critical information.

| Cybersecurity Advancements | Impact of AI in Data Protection |
|---|---|
| Advanced threat detection | Automates threat detection processes, reducing response time and minimizing the risk of data breaches |
| Real-time monitoring | Analyzes vast amounts of data in real-time, enabling faster identification of potential threats |
| Adaptive algorithms | Learns from new cyber threats, continually improving security measures to stay ahead of attackers |
| Enhanced incident response | Automates response processes, enabling quick and effective mitigation of security incidents |
| Proactive vulnerability management | Identifies and addresses vulnerabilities in systems and networks before they can be exploited |

Frequently Asked Questions

What Are the Common Types of Cyber Threats That Individuals and Organizations Face Today?

Social engineering and ransomware are among the most common cyber threats faced by individuals and organizations today. Social engineering exploits human trust, while ransomware encrypts a victim's data and demands payment for its release; both can cause significant financial and reputational damage.

How Does AI Security Differ From Traditional Cybersecurity Methods?

AI security differs from traditional cybersecurity methods by leveraging the power of artificial intelligence to detect and mitigate threats in real-time. Its advantages include enhanced threat detection accuracy, faster response times, and the ability to analyze vast amounts of data for proactive defense.

What Are Some Best Practices for Implementing Data Protection Strategies?

Implementing data protection strategies requires careful consideration of best practices. Data encryption and access control are essential components. By utilizing these techniques, organizations can safeguard their valuable data and mitigate the risk of unauthorized access or breaches.

Can You Provide Examples of Real-World Scenarios Where AI Security Has Successfully Prevented Data Breaches?

In real-world scenarios, AI security has helped prevent data breaches. Its ability to analyze vast amounts of data, detect anomalies, and respond in real time lets defenders contain an intrusion before data is exposed.

What Emerging Technologies or Trends Are Expected to Shape the Future of Cybersecurity?

Emerging technologies like quantum encryption and biometric authentication are reshaping cybersecurity. These advancements promise stronger protection for our data by making unauthorized access far more difficult. The future of cybersecurity is bright.

Conclusion

In conclusion, the pivotal role of AI security in protecting our data is undeniable.

As cyber threats continue to evolve, it’s crucial to understand the power of AI in enhancing data protection strategies.

By harnessing the potential of AI security, we can actively combat and deter malicious attacks.

The future of cybersecurity lies in the hands of AI, as it unravels the intricate web of threats, ensuring the safety and integrity of our valuable information.