To keep AI in law fair and unbiased, you need to tackle data bias by using diverse, representative datasets and continually auditing algorithms for fairness. Transparency and community engagement are essential to build trust and prevent unfair treatment. Clear governance, oversight, and accountability measures help address systemic inequalities. The sections below cover strategies for making justice-focused AI systems both ethical and equitable for everyone involved.

Key Takeaways

- Use diverse, representative datasets to minimize biases and ensure fair treatment across all communities.

- Implement transparent algorithms and regular audits to detect and correct biases in AI systems.

- Engage stakeholders and affected communities in policy development and decision-making processes.

- Establish legal frameworks and oversight mechanisms to enforce accountability and fairness in AI applications.

- Promote continuous research and development of fairness metrics tailored to justice and law enforcement contexts.

Understanding the Roots of Bias in AI Systems

Bias in AI systems often originates in the training data, which reflects existing societal inequalities and discriminatory practices. When that data contains historical prejudices or underrepresents certain groups, models learn and perpetuate those patterns. For instance, if crime data reflects systemic racism, a model trained on it will associate certain neighborhoods or populations with higher criminality, reinforcing stereotypes. Developers may also embed bias unintentionally through design choices, especially when teams lack diverse perspectives or expertise in ethics. Without careful scrutiny and correction, these biases become embedded, so a system can appear neutral while actually perpetuating discrimination.

Recognizing where bias comes from helps you understand why these problems persist and how to address them. Bias mitigation techniques applied during development, together with more diverse training data, can reduce the impact of ingrained prejudices. Local laws and societal factors also shape what fairness requires in a given jurisdiction, so regional legal context matters. Finally, ongoing evaluation and community engagement help keep AI systems aligned with ethical standards and societal values.

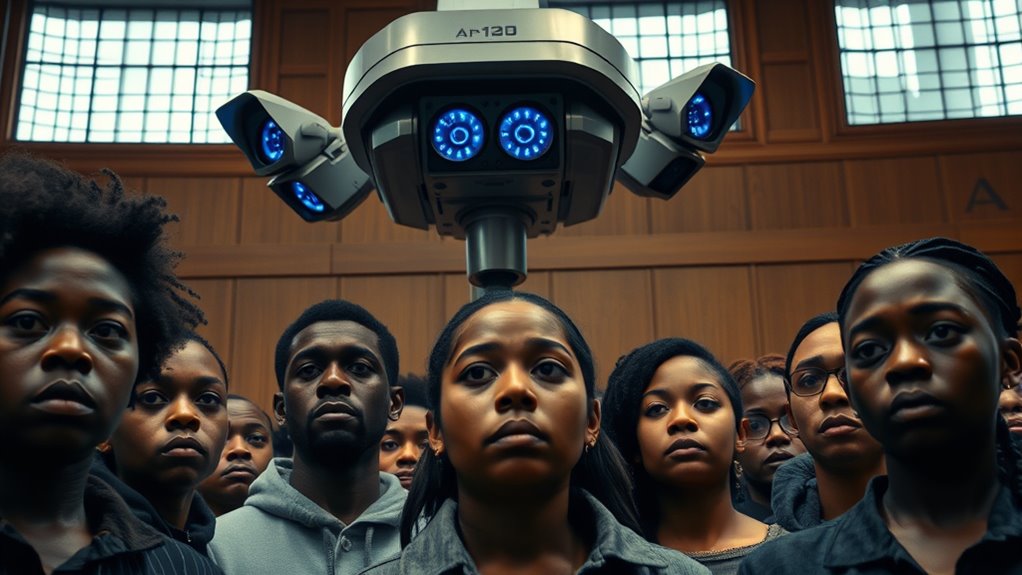

The Disproportionate Impact on Marginalized Communities

AI tools in law enforcement often affect marginalized communities more harshly than others. These systems can lead to over-policing and harsher sentences for Black and Brown individuals, reinforcing existing disparities, so AI can widen justice gaps instead of closing them. Without proper oversight, these biases become embedded in algorithmic decision-making and perpetuate inequities further. Scrutinizing systems for fairness and accuracy, applying algorithmic fairness principles, and building cultural awareness among stakeholders are all needed to make AI applications in the legal system more equitable.

Over-Policing Minorities

Over-policing of marginalized communities has become a concerning consequence of AI-driven law enforcement tools. These systems often target minority neighborhoods more heavily, based on biased predictive policing algorithms and flawed data. AI models trained on historical crime data can reflect systemic racism, leading to increased surveillance and police presence in these areas. Facial recognition errors disproportionately affect people of color, resulting in wrongful stops and arrests. This cycle deepens mistrust between communities and law enforcement while escalating criminalization. Without transparency or oversight, these tools reinforce existing disparities rather than reduce them. You need policies that address bias, ensure accountability, and limit over-policing. Only then can AI serve justice fairly, rather than perpetuating inequality and harming marginalized populations.
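The cycle described above can be illustrated with a toy simulation. This is a minimal sketch under stated assumptions, not a model of any real deployment: two hypothetical neighborhoods have the same underlying crime rate, patrols are allocated in proportion to previously recorded incidents, and incidents are only recorded where patrols are present. The function names, neighborhood names, and numbers are all invented for illustration.

```python
def simulate_patrols(recorded, incidents_per_patrol, total_patrols, rounds):
    """Toy feedback loop: patrols follow past records, and new records
    follow patrols. The underlying crime rate is the same everywhere."""
    recorded = dict(recorded)  # work on a copy
    for _ in range(rounds):
        total = sum(recorded.values())
        # Allocate patrols in proportion to incidents recorded so far.
        patrols = {n: total_patrols * c / total for n, c in recorded.items()}
        # Incidents are only recorded where patrols are present.
        for name, count in patrols.items():
            recorded[name] += incidents_per_patrol * count
    return recorded


def patrol_share(recorded, name):
    """Fraction of records (and hence of patrols) tied to one neighborhood."""
    return recorded[name] / sum(recorded.values())


# Hypothetical starting point: historical records are skewed 60/40.
start = {"north": 60.0, "south": 40.0}
end = simulate_patrols(start, incidents_per_patrol=0.5, total_patrols=100, rounds=20)
```

In this sketch the "north" neighborhood keeps receiving 60% of patrols in every round even though both areas have the same underlying rate. The records never correct the initial skew, because they only measure where officers were sent.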

Increased Justice Disparities

How does the use of AI in law enforcement deepen existing inequalities? It often targets marginalized communities unfairly, reinforcing systemic disparities. AI tools, trained on biased data, lead to harsher sentences, wrongful arrests, and increased surveillance of Black and Brown populations. These algorithms can distort justice by prioritizing certain groups over others without accountability. Consider:

- Biased data causes disproportionate targeting, skewing outcomes.

- In some evaluations, facial recognition false-positive rates have been 10 to 100 times higher for certain demographic groups, depending on the algorithm.

- Lack of transparency prevents communities from challenging unfair decisions.

- The lack of equitable data representation in training datasets exacerbates these issues.

Furthermore, the reliance on algorithmic fairness metrics often fails to account for historical biases embedded within the data, perpetuating injustices.

This cycle worsens disparities, making it harder for marginalized groups to access fair treatment. Without proper oversight, AI risks perpetuating injustice rather than correcting it, deepening the inequality that already exists in the criminal justice system.

Challenges in Data Collection and Model Training

When collecting data for legal AI models, you often encounter biases embedded in your sources, which can skew results and reinforce existing inequalities. Incomplete demographic data makes it hard to verify fair treatment across different groups. Limited or unrepresentative training data further hampers your ability to develop accurate models that serve all communities fairly. Differences in how individual datasets are designed and assembled also influence how groups are represented during training. Ethical, deliberate data collection practices help you spot and address these problems early. For example, understanding cultural context can make the data you gather more inclusive and help prevent inadvertent discrimination.

Data Source Biases

Data source biases pose a significant challenge in developing fair AI systems for law enforcement. If your training data isn’t representative or contains historical prejudices, your AI will inherit and amplify these biases. This can lead to unfair targeting and misidentification of certain groups. To understand this better, consider these key issues:

- The data often reflect systemic racism and past injustices, skewing results.

- Incomplete or unbalanced datasets exclude diverse populations, reducing accuracy.

- Design choices by developers lacking ethical insight can embed biases unknowingly.

These biases are hidden in the data you train on, so scrutinize your sources carefully. Examining how the training data was collected can reveal where bias enters and help you build more balanced datasets. Data-driven checks can identify skewed patterns and correct disparities, and bias mitigation techniques applied during model training can further reduce unfair outcomes. Without these steps, your AI risks perpetuating discrimination and undermining fairness in law enforcement.
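One simple way to scrutinize a data source is to compare each group's share of the dataset with its share of a reference population. The sketch below assumes records are dictionaries with a group field and that trustworthy reference shares are available; `representation_gaps`, the field name, and the sample numbers are hypothetical.

```python
from collections import Counter


def representation_gaps(records, group_key, reference_shares):
    """Return observed share minus reference share for each group.

    Negative values mean the group is underrepresented in the dataset
    relative to the reference population.
    """
    counts = Counter(r[group_key] for r in records)
    n = sum(counts.values())
    return {
        group: counts.get(group, 0) / n - expected
        for group, expected in reference_shares.items()
    }


# Hypothetical dataset: 7 records from group "A", 3 from group "B".
records = [{"group": "A"}] * 7 + [{"group": "B"}] * 3
# Hypothetical reference population split evenly between the two groups.
gaps = representation_gaps(records, "group", {"A": 0.5, "B": 0.5})
```

Here group "B" comes out about 20 percentage points underrepresented. A check like this only flags skewed sampling; it says nothing about whether the labels themselves encode historical bias.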

Incomplete Demographic Data

Incomplete demographic data presents a significant obstacle in developing fair AI systems for law enforcement, because accurate and comprehensive information about individuals’ racial, ethnic, and socioeconomic backgrounds is often missing or difficult to obtain. Without it, you cannot reliably identify and correct biases affecting protected groups. The gap can also lead to underrepresentation of marginalized populations, making algorithms less accurate and more discriminatory, and you might unknowingly reinforce existing inequalities if the training data lacks diversity or completeness. Gathering full demographic information is challenging due to privacy concerns, reporting inconsistencies, and systemic barriers. A lack of vetted, trustworthy feedback can further hinder efforts to improve model fairness. Data analysis techniques that account for missing or incomplete data can mitigate some biases, but they are not foolproof. For example, imputation methods can fill in gaps, yet they depend heavily on the quality of the data that is available. Understanding the risks involved in the data collection process is also crucial to maintaining ethical standards. Left unaddressed, these gaps mean AI systems may perpetuate or worsen biases, undermining fairness and trust in law enforcement’s use of technology.
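The caveat about imputation can be made concrete with a small example. The sketch below uses mode imputation (filling gaps with the most common value) only because it is simple; the text does not name a specific method, and the helper names and sample values are hypothetical.

```python
from collections import Counter


def share(values, group):
    """Share of a group among the non-missing values."""
    known = [v for v in values if v is not None]
    return known.count(group) / len(known)


def impute_with_mode(values):
    """Replace missing entries (None) with the most common observed value."""
    known = [v for v in values if v is not None]
    mode = Counter(known).most_common(1)[0][0]
    return [mode if v is None else v for v in values]


# Hypothetical demographic field with two missing entries.
observed = ["A", "A", "A", "B", None, None]
imputed = impute_with_mode(observed)

share_b_before = share(observed, "B")  # 1 of 4 known values
share_b_after = share(imputed, "B")    # 1 of 6 values after filling gaps
```

Filling every gap with the majority value shrinks group "B" from a quarter of the known records to a sixth of the completed ones. If the missing entries actually belonged to "B", the imputed dataset would understate that group even more than the raw one did.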

Training Data Limitations

Training data limitations pose a significant challenge because the quality and representativeness of the data directly impact the fairness and accuracy of AI models in law enforcement. When the data is biased, incomplete, or unrepresentative, AI systems can perpetuate or worsen existing disparities. You must recognize that:

- The data often reflects historical biases, reinforcing systemic racism and discrimination.

- Skewed or insufficient data leads to inaccurate predictions and misidentifications.

- Developers may unintentionally embed bias through choices made during algorithm design.

Without diverse, balanced, and transparent data collection, AI tools risk unfair treatment of marginalized communities. Ensuring data quality is essential for building trustworthy, unbiased systems that serve justice rather than undermine it.

The Role of Facial Recognition and Surveillance Technologies

Facial recognition and surveillance technologies have become integral tools in law enforcement, promising enhanced security and efficiency. However, their accuracy varies markedly across populations, which creates a risk of wrongful identification. Studies of commercial systems have found much higher error rates for darker-skinned faces than for lighter-skinned ones. These systems also often operate without proper oversight, increasing the chance of rights violations.

| Technology Aspect | Impact |
|---|---|
| Error Rates | Up to 34.7% for darker-skinned women vs. 0.8% for lighter-skinned men (reported for commercial gender classifiers) |
| Racial Disparities | Consistent accuracy gaps across racial groups |
| Use in Surveillance | Mass rights violations and automation bias |
| Misidentification Risks | Wrongful arrests and prolonged investigations |
| Regulation & Oversight | Lack of regulation heightens misuse potential |

You must be aware of these issues to advocate for fair, transparent AI use.
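To see how large the gap in the table is, you can compute per-group error rates from a labeled test set and take the ratio of the worst rate to the best. The following is a minimal sketch: the helper names are hypothetical, and the two rates plugged in at the end are simply the figures quoted in the table.

```python
from collections import defaultdict


def error_rates_by_group(results):
    """results: iterable of (group, was_correct) pairs from a labeled test set."""
    totals = defaultdict(int)
    errors = defaultdict(int)
    for group, was_correct in results:
        totals[group] += 1
        if not was_correct:
            errors[group] += 1
    return {g: errors[g] / totals[g] for g in totals}


def disparity_ratio(rates):
    """Ratio of the highest group error rate to the lowest."""
    lowest = min(rates.values())
    if lowest == 0:
        return float("inf")
    return max(rates.values()) / lowest


# Error rates quoted in the table above, expressed as fractions.
table_rates = {"darker-skinned women": 0.347, "lighter-skinned men": 0.008}
ratio = disparity_ratio(table_rates)
```

With the table's figures the ratio is roughly 43, meaning the worst-served group saw errors about 43 times as often as the best-served one. A single overall accuracy number would hide that gap entirely.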

Ethical and Legal Concerns in AI-Driven Policing

AI-driven policing tools raise significant ethical and legal questions that go beyond issues of accuracy and bias. These concerns involve fundamental rights like privacy, due process, and equal protection. You must consider:

- Transparency: How openly agencies disclose AI use influences accountability and public trust.

- Due Process: How difficult it is to understand or contest opaque “black box” algorithms that influence legal decisions.

- Oversight: The need for legal frameworks that regulate AI deployment, prevent misuse, and guarantee fair treatment.

Without proper oversight, AI tools risk violating constitutional rights, leading to wrongful arrests or discriminatory practices. As you implement these systems, balancing technological benefits with ethical responsibilities is crucial to uphold justice and public confidence.

Strategies for Promoting Transparency and Accountability

Promoting transparency and accountability in law enforcement AI systems requires implementing clear policies that make the use of these tools understandable to the public and stakeholders. You should advocate for open reporting on AI deployment, decision-making processes, and performance metrics. Regular audits and independent reviews help identify biases and ensure fairness. Community engagement fosters trust and provides valuable feedback. Consider this overview:

| Strategy | Action | Goal |
|---|---|---|
| Public disclosure | Share AI use policies openly | Build trust and awareness |
| Independent audits | Conduct third-party evaluations | Detect biases and errors |
| Stakeholder engagement | Involve community in oversight | Ensure accountability |
| Transparent algorithms | Use explainable AI models | Clarify decision processes |

These steps help create an environment of trust, responsibility, and ongoing improvement.

Implementing Governance Frameworks for Fair AI Use

Establishing effective governance frameworks is essential to guarantee that AI systems used in law enforcement operate fairly and responsibly. You need clear policies that define ethical standards, accountability, and transparency. Regular oversight ensures AI tools don’t perpetuate biases or cause harm. To build strong governance, consider these key steps:

- Implement strict guidelines for data collection and model training to prevent biased inputs.

- Mandate human oversight in decision-making processes to catch errors and ensure fairness.

- Conduct ongoing audits and impact assessments to identify and address bias or misuse promptly.
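The human-oversight step in the list above can be expressed as a routing rule. This is only a sketch of one possible policy, assuming the system outputs an action and a confidence score; the action names, the `Recommendation` type, and the 0.9 threshold are hypothetical and would need to be set by the governing policy, not by developers alone.

```python
from dataclasses import dataclass

# Hypothetical list of actions that always require a human decision.
HIGH_IMPACT_ACTIONS = {"arrest", "detention", "sentencing_recommendation"}


@dataclass
class Recommendation:
    action: str
    confidence: float  # model's self-reported score in [0, 1]


def needs_human_review(rec, min_confidence=0.9):
    """Route a recommendation to a human reviewer when the stakes are
    high or the model is not confident enough."""
    if rec.action in HIGH_IMPACT_ACTIONS:
        return True
    return rec.confidence < min_confidence
```

For example, `needs_human_review(Recommendation("arrest", 0.99))` returns `True` regardless of the score, because high-impact actions are never automated in this sketch. Logging every routed case also gives later audits something concrete to review.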

Building Community Trust Through Oversight and Engagement

Building community trust requires meaningful oversight and active engagement with the public. You can foster trust by ensuring transparency about AI systems’ design, purpose, and limitations. Regularly involve community members, especially marginalized groups, in discussions about AI deployment, listening to their concerns and feedback. Public oversight committees, composed of diverse stakeholders, help hold agencies accountable and review AI use policies. Clear communication about how AI impacts communities builds confidence and dispels suspicion. Providing accessible information and avenues for grievances encourages ongoing dialogue. When communities feel heard and see that their voices influence decision-making, trust grows. This approach not only promotes fairness but also demonstrates your commitment to responsible AI use that respects rights and upholds justice.

Moving Towards Equitable and Responsible AI Policies

Achieving truly equitable and responsible AI policies requires a proactive approach that prioritizes fairness, transparency, and accountability. You must establish clear guidelines that address biases, ensure oversight, and promote community involvement. To do this effectively:

- Develop thorough regulations that mandate bias testing, transparency, and regular audits of AI systems.

- Incorporate diverse datasets and inclusive design practices to minimize systemic bias.

- Promote ongoing training for law enforcement and policymakers on ethical AI use and bias mitigation strategies.

Frequently Asked Questions

How Can Biases in AI Be Detected and Corrected Effectively?

You can detect and correct biases in AI by regularly auditing your models with diverse, representative data sets. Use fairness metrics to identify disparities, and involve multidisciplinary teams—including legal and ethical experts—to review outcomes. Implement transparency by documenting your algorithms’ decision-making processes, and incorporate human oversight to catch biased patterns. Continuously update your data and models based on feedback and new insights to guarantee ongoing fairness and accuracy.
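As a rough illustration of what a fairness audit measures, the sketch below compares two common quantities across groups: the selection rate (how often the model flags someone) and the false positive rate (how often it flags someone who should not have been flagged). The function names and the sample data are hypothetical, and real audits typically use larger samples and more metrics.

```python
def selection_rate(predictions):
    """Fraction of cases the model flagged (prediction == 1)."""
    return sum(predictions) / len(predictions)


def false_positive_rate(predictions, labels):
    """Fraction of true negatives (label == 0) that the model flagged."""
    flagged_negatives = [p for p, y in zip(predictions, labels) if y == 0]
    return sum(flagged_negatives) / len(flagged_negatives)


def audit(groups):
    """groups: dict of group name -> (predictions, labels), lists of 0/1."""
    return {
        name: {
            "selection_rate": selection_rate(preds),
            "false_positive_rate": false_positive_rate(preds, labels),
        }
        for name, (preds, labels) in groups.items()
    }


# Hypothetical audit sample: both groups have identical true labels.
labels = [1, 0, 0, 0, 1, 0, 0, 0]
report = audit({
    "group_a": ([1, 1, 0, 0, 1, 0, 0, 0], labels),
    "group_b": ([1, 1, 1, 0, 1, 0, 1, 0], labels),
})
```

In this sample the two groups have the same true outcomes, yet the model flags group_b more often and wrongly flags half of its true negatives, compared with one in six for group_a. Gaps like these are the disparities an audit is meant to surface before a human decides how to correct them.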

What Legal Protections Exist for Individuals Wrongly Identified by AI Systems?

You might think protections are clear, but they’re often limited. If an AI system wrongly identifies you, you can challenge the evidence through legal avenues such as moving to suppress the identification or arguing that your due process rights were violated. Some jurisdictions require transparency and disclosure about AI use, giving you a chance to question its accuracy. However, the legal landscape is still evolving, and whether you can secure a remedy depends on the jurisdiction and the specific circumstances.

How Does AI Bias Differ Across Various Law Enforcement Agencies?

You’ll notice AI bias varies across law enforcement agencies due to differences in data quality, oversight, and policies. Some agencies may use more diverse training data and implement stricter checks, reducing bias. Others might lack transparency or fail to address systemic issues, leading to higher misidentification and discrimination. Your experience depends on the agency’s commitment to fairness, ethical standards, and ongoing evaluation of their AI tools.

What Role Do Private Companies Play in Developing Unbiased AI Tools?

Private companies develop AI tools that law enforcement agencies rely on, but their role in creating unbiased AI is complex. You need to examine their data sources, design choices, and testing procedures, as bias can inadvertently be embedded. Press them for transparency and accountability, and advocate for strict standards and regulations. By demanding ethical practices, you help ensure these tools serve justice fairly and reduce the risk of systemic bias.

How Can Community Members Influence AI Policy Decisions in Policing?

You can influence AI policy decisions in policing by actively participating in public hearings, community forums, and local government meetings. Don’t assume your voice doesn’t matter: advocate for transparency, oversight, and fairness. Organize with neighbors to push for regulations that prevent bias and protect rights. Your engagement helps hold agencies accountable and makes it more likely that policies reflect community values, leading to fairer, more equitable AI use in law enforcement.

Conclusion

To make sure AI in law truly serves justice, you must see it as a delicate garden needing constant care. By tending to biases, engaging communities, and building transparent policies, you can nurture growth that’s fair and unbiased. Think of your efforts as watering this garden—each action helping justice blossom evenly for all. With dedication and vigilance, you can transform AI from a potential thorn into a tool that upholds fairness and equality.